
Introduction: Music and SSI

Mathieu: Many different people have spoken to me about some of the work that you and the Human Colossus Foundation are doing: both within the foundation and also with different community initiatives over the past little while. I have been quite interested in the work you’re doing, and I’m looking forward to just going a little deeper into this with you today. Thanks for doing this.

When I started looking into your background and how you got into what you’re doing now, it seems that early in your career you had a real passion for the music industry and were very active in it.

Paul: Yes, I’ve always had a bit of a dual career in music and the pharmaceutical industry. Like a lot of people in the music industry, sometimes you need a second job to keep your passion going. My crutch was the pharmaceutical industry, but yes, the music industry. We’ll take it back a step: my degree at university was in I.T. Right after university, I did an audio engineering degree in London and learned how to work with the big mixing desks in recording studios. From there, I started working for a couple of recording studios, getting to understand the trade as an audio engineer. I fell into private events through my music industry career. I ended up doing showcases at the Gibson showroom for international artists travelling into the U.K. Gibson Guitars had some showrooms in London, so whenever anyone decent was coming through, I’d put on a showcase for the U.K. music industry, which would usually kick off their U.K. tour.

One of the artists that came through was an Indian artist called Raghu Dixit, who was actually the highest selling independent artist in India (outside of Bollywood). I put on one of these showcases for him, and through that, I ended up co-managing him for a while. That kept me busy. I got to fly around the world a little bit with him, but it was that journey that started getting me into the data space at the same time. I created a platform called the International Music Community, where the idea was to introduce up-and-coming artists to music industry professionals, but using data to build those algorithms. For an artist, we’d get all their social media traffic, put that into an algorithm, and then hook them up with industry professionals who had worked with artists of a similar level. It was an interesting space. We ended up white-labelling that platform and a lot of the music industry conferences really liked it. As you can imagine, you’ve got the president of Warner Brothers or something on a panel at a conference, and they don’t want to be bombarded with contacts from baby artists. Through this tiering thing that we’d built within the platform, I was able to protect the identity of the music professionals at the high end. But at the same time, they could look down into the filter to find the up-and-coming artists that they were looking for. So that got me into the data space in music. At the same time, I’d always had this parallel career in the pharmaceutical industry in data management and statistical analysis. That was paying the bills as I was doing all of the music stuff.

I suppose that’s how I became so interested in semantics. That’s how it all started; my journey into SSI was through that algorithm development within the music platform. I wanted a way of authenticating when an industry professional said that they worked with Rod Stewart or something like that. I wanted some way of authenticating that information, and that’s how I got into SSI, decentralized authentication, and key management, etcetera.

Mathieu: That’s super interesting! As you were explaining your marketplace solution of connecting up-and-coming artists or musicians with labels and so forth, right away, my head went to credentials, and showing proofs, and just doing matchmaking. With the way the platform had been built, but before self-sovereign identity, you could see the next evolution of the platform growing into its own ecosystem solution, potentially using verifiable credentials.

Paul: Exactly. The platform was an incredibly federated platform when I built it because I didn’t know that SSI existed. So as soon as I found that ecosystem, I tore up the rulebook and said, “This isn’t going to work; I have to rebuild it.”

SSI and Pharma

Mathieu: At the same time, you were definitely solving significant problems with the way it was built, and I’m sure it still does. Now that this type of thing is possible, we could explore all these new business opportunities with this. At least, that’s what gets me excited every day, when I get up and look at the self-sovereign identity stuff.

Working in pharma, it seems like big data is just crucial to that field. Big data and identity management are at the core. Is that what got you thinking more about the data structures? In pharma, I need to have very solid data inputs. But if I want to be able to share results across multiple parties, especially for clinical testing, that got you thinking about the structure of data a lot more, am I right?

Paul: Yes, absolutely. One of the first jobs I had in the pharmaceutical industry was actually data entry. They had these clinical report forms which were all handwritten, and I’d have to enter that into the system. In those days it was very dodgy: you’d have all sorts of doctor’s notes and comments, and you’re entering all of that, which is obviously full of PII and dangerous data. It wasn’t nearly as strict as it is now, but yes, it did get me thinking about semantics very early. Obviously, for all those free-form text fields, bringing in predefined entries and putting some structure around data is necessary, so that it becomes useful for machine-reading and analytics. One of the pain points with clinical data capture is that when you work on two sister trials and you’re trying to aggregate that data together at the end of the process, it was always little things that would break that pooling process. For example, maybe the same attribute had different formatting in each study, or the length of the attribute was different in each study, so when you aggregated it, the data would truncate. These are pain points that are still going on today in the pharmaceutical industry. That’s where I started delving hard into semantics, and trying to find new ways of capturing data: different architectures, all that sort of stuff, to try and get some of those pain points ironed out.
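The pooling problem Paul describes can be made concrete with a small sketch. Everything here is hypothetical (the field names, the two studies, their date formats and declared field lengths); it only illustrates how a format mismatch and a length mismatch are reconciled before two sister trials are aggregated.

```python
from datetime import datetime

# Hypothetical records from two sister trials; names and values are invented.
study_a = [{"subject_id": "A-001", "visit_date": "2020-03-14", "site": "London General"}]
study_b = [{"subject_id": "B-001", "visit_date": "14/03/2020", "site": "Royal Manchester Infirmary"}]

# Hypothetical per-study capture rules: the date format each study used
# and the declared length of the free-text "site" attribute.
CAPTURE_RULES = {
    "study_a": {"date_format": "%Y-%m-%d", "site_length": 16},
    "study_b": {"date_format": "%d/%m/%Y", "site_length": 32},
}


def pool(studies):
    """Normalise dates to ISO 8601 and size text fields to the widest declaration."""
    pooled_length = max(rule["site_length"] for rule in CAPTURE_RULES.values())
    pooled = []
    for name, records in studies.items():
        date_format = CAPTURE_RULES[name]["date_format"]
        for record in records:
            site = record["site"]
            # Fail loudly instead of silently truncating the value.
            if len(site) > pooled_length:
                raise ValueError(f"{site!r} would be truncated at {pooled_length} characters")
            visit = datetime.strptime(record["visit_date"], date_format).date()
            pooled.append({
                "study": name,
                "subject_id": record["subject_id"],
                "visit_date": visit.isoformat(),
                "site": site,
            })
    return pooled


pooled = pool({"study_a": study_a, "study_b": study_b})
```

Had the pooled table simply reused the shorter 16-character declaration from the first study, the second study's site name would have been cut off, which is the kind of silent truncation mentioned above.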

Mathieu: A long time ago, I spent some time working in a clinic. We conducted clinical trials and later, staged trials on patients who would come in for the trials. I did all sorts of different stuff in this clinic, but I was more on the input side. I was managing the operation, the intake of the drug that was being administered, and the blood sampling and the testing. Because I was more on the input side of things, it wasn’t on the data and the analysis side of things. It seems that’s how you’re looking at the inputs and semantics: it’s the two sides of this. How do you describe inputs and semantics to someone?

Semantics and Inputs

Paul: The easiest way to think about it is: inputs are what is being entered into a digital system or ecosystem. Semantics are what provides context and meaning to the information that’s being entered into a system. Those are the two main differences. Another way to look at it would be data entry versus data capture. When you just say ‘pure data entry,’ that’s the keys that you enter on your keyboard into a system. But really, to bring in any context or meaning, it relies on data capture: when you enter something, you need to capture it into a schema structure or something like that. That’s where you have all your formatting and your predefined entries, your human-readable labels, the context, the metadata of the schema, and all that sort of stuff. All of that is rich information that brings contextual meaning to the data that’s been entered. The data inputs are on one side, the input side. There, you want to know that the data has come from an authenticable source, whereas on the semantic side, it’s more about making sure that the context and the semantics around the data, those constructs, are immutable. That way, any actor who is interacting with any of those objects knows that the data capture structures are the same for everybody.
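The distinction between data entry and data capture can be sketched in a few lines. The schema below is purely illustrative (the attribute names, labels, and rules are assumptions, not taken from any real capture structure): raw keyed-in values only become meaningful once they pass through a structure that carries labels, formats, and predefined entries.

```python
import re

# Hypothetical capture structure: attribute names and rules are illustrative only.
SCHEMA = {
    "blood_type": {"label": "Blood type", "entries": ["A", "B", "AB", "O"]},
    "visit_date": {"label": "Date of visit", "format": r"\d{4}-\d{2}-\d{2}"},
}


def capture(raw):
    """Attach schema context to raw keyed-in values, rejecting what the schema cannot describe."""
    captured = {}
    for name, value in raw.items():
        rule = SCHEMA.get(name)
        if rule is None:
            raise KeyError(f"unknown attribute: {name}")
        # Predefined entries: only values from the agreed list are accepted.
        if "entries" in rule and value not in rule["entries"]:
            raise ValueError(f"{value!r} is not a predefined entry for {name}")
        # Format: the whole value must match the declared pattern.
        if "format" in rule and not re.fullmatch(rule["format"], value):
            raise ValueError(f"{value!r} does not match the format for {name}")
        captured[name] = {"label": rule["label"], "value": value}
    return captured


record = capture({"blood_type": "AB", "visit_date": "2021-02-01"})
```

Because every actor captures against the same structure, a value such as `"01/02/2021"` is rejected at the point of capture rather than discovered later during analysis.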

Mathieu: You had a nice illustration diagram of semantics and inputs; you started splitting everything up into both of them. Does this go all the way down?

If you’re looking at the different inputs through credentials, does that push all the way through to attributes? Does that push all the way through to deeper objects?

It definitely seems that in the self-sovereign identity space, and entering with that mindset if these things are needed — you must have realized pretty quickly that for this to actually take off, there’s a lot of this infrastructure that needs to be in place.

Paul: Yes. As soon as you’re talking about a decentralized ecosystem, you are looking at a lot of innovation to enable that whole space to work. One suggestion that I would say to people is that you’ve almost got to treat the entire data economy as one company. Within a company, you would have a data analyst group or something similar, plus perhaps other groups that are more geared towards marketing or whatever. These sorts of differences within an organization work quite well, but as soon as you go into a decentralized space, it’s more challenging. Where do you categorize things in a totally decentralized space, so that you have communication throughout the entire data ecosystem? For that model that you’re talking about, I think the official term for it is “the model of identifier states.” At the Human Colossus Foundation, we call it the rugby ball model because of the shape of it.

I built that model because I thought the decentralized identity folks were overlooking semantics. Obviously, with my data management background, I wanted to get a model for them to understand the importance of semantics. When you talk about decentralized key management solutions, you’re really only looking at half of the puzzle. That half is all about making sure that data comes from an authentic source. All the immutable semantics and so on, that’s the other half of the model. I call that the semantics domain. That’s been fun; it took a little while to get down to the nitty-gritty of the dualism between every component within each half of that model. For instance, when you talk about an attribute in a schema, on the input side that’s a claim within a credential. Essentially, the input side is about real data, and the semantic side is about the constructs that were used to put context into those data inputs.

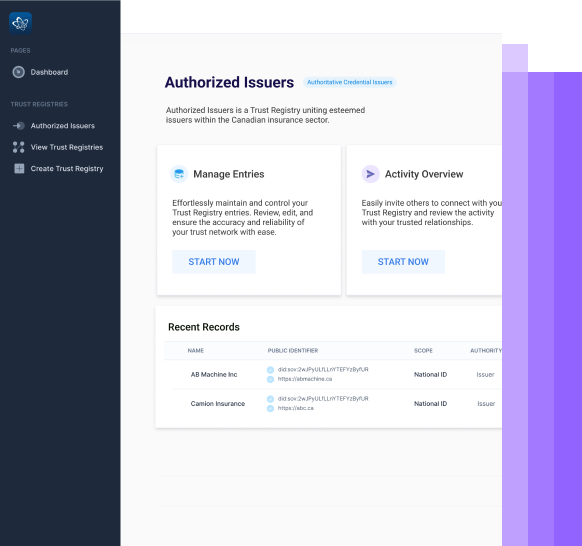

Mathieu: You’re involved in many different communities; we’ve seen each other in the different working groups, and you run your own working group in the Trust over I.P. movement. How does this model fit into the Trust over I.P. stack? There are different types of organizations or people that are playing at different levels in the Trust over I.P. stack. For example, you could have people just playing in Layer One: they’re building public identity utilities. You could have other companies, like the company I represent, that are playing more at the top layers: we’re trying to enable ecosystem solutions to be built. However, just as there is a governance layer across the stack, what you’re bringing here, through inputs and semantics, needs to be taken into consideration across the stack. How does that fit into the whole picture?

Paul: It’s just a different vantage point; it’s still a full ecosystem. With the dual-stack at Trust over I.P., you’re looking at governance versus technology across the four layers that you mentioned, from utility at Layer One, right up to the ecosystems at Layer Four. That gives you a fairly big picture of a data economy, but only from a perspective of governance versus technology. The inputs and semantics group is just a different vantage point: thinking more about what is entered into a system, the meaning of the data that you’re capturing, and stuff like that. It’s still a totally full model, but it’s complementary to the dual-stack. I’d suggest that data entry and data capture are valid for all four layers, and at each layer, it’s really about how you’re capturing that data. It’s not so much about what you’re capturing at the time; it’s thinking a little bit beyond that. For example, with all the vaccine stuff, it’s great if your apps can work for contact tracing and all that sort of stuff. But the way that I think about data is, “Okay, in the pandemic, you have the World Health Organization; how can we make this data useful for their processes and the analytics at their end?” That would enable them to get some real-time analytics to see how the pandemic is responding. I think there’s always a broader context than some developers sometimes think.

Human Colossus Foundation & KERI

Mathieu: You approached this through the Human Colossus Foundation; what were your steps to forming this? What was your journey of starting this organization, and why have you structured it the way you’ve structured it?

Paul: The foundation started because of Overlays Capture Architecture at the time. The foundation was founded by three of us: myself, Robert Mitwicki, and Philippe Page. The idea of it really kicked off when I met Robert. Robert’s a deep stack developer, but he’s an interesting developer — he’s always got a very broad perspective on the things that he’s building. We hit it off immediately when I showed him the Overlays Capture Architecture (OCA). Initially, we had built an innovation hub at a proprietary company called Dativa, but we soon realized while we were building our OCA and that environment that it had to be open source. By that time, we’d also started developing a model called the “Master Mouse Model,” which is a conceptual model of what a dynamic data economy would look like. That was all spearheaded through the characteristics of Overlays Capture Architecture. Really, the reason we built the foundation was we knew that OCA was going to be an important part. We knew that what they were doing with SSI, and in Hyperledger Aries and Hyperledger Indy, was an important space for authentic provenance chains. It was about taking these different parts, and trying to have a foundation where we could knit some of these components together without having to disrupt too much of what was going on in those communities. We could do it within our own foundation. Everything we build is totally open source. As soon as we’ve done a proof of concept or we’ve knitted some components together for the benefit of the economy, then we can showcase that, and people can simply pick up those components and integrate them into their own solutions.

Mathieu: For the work that’s being produced by the foundation (like the overlays capture architecture, which was the starting point): is it all very use-case-oriented, or is it more generic frameworks that only need to be customized?

Paul: No, everything is totally generic. One of the components we’re developing at the moment is a trusted digital assistant. That would be a deeper component in the stack than a traditional digital wallet. You’re probably aware of KERI; have you come across KERI, the Key Event Receipt Infrastructure?

Mathieu: In the seminar you threw, you had Sam Smith, who was involved in this. We were personally quite excited. We’re excited about KERI, but we’re also excited about the network of networks concept, so those fit in nicely together.

Paul: KERI is a very interesting architecture because, with a data ecosystem, you can really go two different ways. You can have your Root of Trust being a node on the network, whether that’s Sovrin, or Ethereum, or Bitcoin, or whatever provides a Root of Trust. What KERI promises to enable (and I say ‘promises’ because it’s still being developed as we speak) is that you could change your Root of Trust, because it’s a ledger-less solution. Your Root of Trust could be what we call a trusted digital assistant, which is a component on your mobile phone. The importance of doing it that way is that you avoid all this potential network lock-in. That is, when some people are building on Hyperledger Fabric and others on Ethereum and the like, you could potentially get into these interoperability issues with the way DIDs are currently. With decentralized identifiers, you have this method space that usually gives you the location of where the identifier is housed. So, if it’s a did:sov, that suggests it’s on the Sovrin network. There’s something like 87 different methods, so it’s not great for interoperability.

What KERI allows, is if you have the identifier as a component on your mobile phone, then you can suddenly interact with any network: you’re not locked into those networks. That’s a really interesting technology that we’re going to be delving hard into at the Human Colossus Foundation, for sure.
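The "method space" Paul mentions is the second colon-separated segment of a DID, which follows the general shape `did:<method>:<method-specific-id>`. The sketch below simply splits out that segment; the `NETWORKS` lookup table and the sample identifiers are illustrative assumptions, not a registry of real methods.

```python
def did_method(did):
    """Return the method name from a DID of the form did:<method>:<method-specific-id>."""
    parts = did.split(":", 2)
    if len(parts) != 3 or parts[0] != "did" or not parts[1] or not parts[2]:
        raise ValueError(f"not a DID: {did!r}")
    return parts[1]


# Hypothetical lookup from method name to the network the identifier is anchored on.
NETWORKS = {"sov": "Sovrin", "ethr": "Ethereum"}


def anchored_on(did):
    """Describe where a DID is housed, based only on its method segment."""
    return NETWORKS.get(did_method(did), "unknown network")


location = anchored_on("did:sov:WRfXPg8dantKVubE3HX8pw")
```

A verifier that only understands a handful of methods has to treat every other method as unknown, which is one way to picture the interoperability concern raised here.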

Mathieu: My perspective on the evolution of the self-sovereign identity stack, is that we’re at a point where there needs to be a lot more intelligence built into agents to create more value.

With your digital assistant utilizing KERI, is that a way to say you’re basically enabling a smarter agent, that fits the real-world business processes?

Paul: Yes, exactly. The advantage of it is that we’re not stopping people from building on any blockchain networks, but at the same time, we’re not reliant on that, as well. If I could describe the ‘trusted digital assistant’ in a short sentence, I’d call it an API plug-in gateway, into a dynamic data economy. Today, a browser is really your way into the internet. The way for individual citizens to get into the decentralized dynamic data economy, is through this component on your mobile device. So, you can think of it as a personal browser, if you like.

Mathieu: Yes. That truly is being open source. Whether I’m the ‘good health pass’ or if I’m some ecosystem solution, I could choose to incorporate this into my stack. That gives you all of the context and all of the other benefits that you need to have. If I need to worry about privacy, or user experience, this could fuel a lot of these benefits that we talk about every day in SSI.

Paul: Absolutely. I think that there are three main domains that you really need to decentralize: one is obviously the decentralized key management, the second is decentralized semantics, and then the last one is decentralized governance. I think the governance piece is maybe where KERI can help, because you’re not necessarily locked into those networks. I believe that can help a lot to decentralize governance. For instance, if I’m at border control in the U.K., or China, or any of those different countries, you can’t expect them to all be using the same network. By introducing KERI, it gets you out of those potential network lock-in issues. When we say ‘decentralized governance’, I think it sits in that sort of space.

Working Groups within Trust over IP

Mathieu: You’ve chosen the Trust over I.P. as a good home to conduct certain work with the community. Would you mind describing what’s going on right now within the Trust over I.P., inside the inputs and semantics working group?

Paul: You can think of the inputs and semantics working group as the innovation hub of Trust over I.P. You don’t have to be an identity network, that is, an SSI network. If you’re coming into the space and you have an identity solution of whatever kind, we can look at that within the inputs and semantics group. We would try to knit it together into a proof-of-concept or pilot, without disrupting some of the businesses that are already developing strong SSI solutions within the dual-stack as it stands. I think there’s a little bit more freedom in the inputs and semantics working group for people to work on brand new solutions.

Mathieu: Is it more new solutions, or do you see a mix? There are so many solutions that exist in the market today. I’m sure you could talk about so many things that go on in pharma, for example. You’re working on helping entrepreneurs or helping new ecosystems, but is it a mix of both that come through there?

Paul: It’s absolutely a mix of both. You’ll get people who want to set up new ecosystems, but maybe they’re sometimes working with some legacy solutions. They want to figure out how to deconstruct what they’ve done to make it more decentralized. All of those issues can come into the inputs and semantics group. At the same time, we’re also looking at new solutions such as semantic containers. Some of the transient portability solutions can also fit into inputs and semantics, and we see how we can work them into moving data around in a safe and secure manner.

Mathieu: It’s such a cool space. I was looking into it: as you said, there are task forces on storage and portability; there’s privacy, and risk, and notice, and consent.

There are so many things that need to be thought of from the user experience standpoint, or from the business case standpoint: to figure out what to show, what not to show, and how certain functions should work. You’re able to absorb that, and build it into the models.

Paul: Probably one area that needs strengthening up a little bit, is the user experience side of things. There is a human experience working group that is set up at Trust over I.P. It’s not housed in the inputs and semantics group, but it’s a very new group, so I don’t think they’re totally up and running yet. It’ll be interesting to see what comes out of that task force.

Mathieu: For just a simple example of what you mentioned earlier in the pharma segment: the dates can get screwed up in different formats. Being in Canada, that happens very easily if dates are written in English and in French — you write it in one format if it’s in English, and another format if it’s in French, so there’s a mismatch, to begin with. That’s a simple user experience example where, if you want to internationalize a solution, or if you want to make it applicable, it needs to be taken into consideration.

I would love to hear more on how you thought about general user experience overall, but it seems like the structure that you’re building and bringing up enables contextualization to happen inside of the actual wallets, and apps and systems that are being used by end-users.

Paul: The semantics part is very interesting. I think we’ve stumbled across a really nice architecture, in that it doesn’t matter what language you want your credential to resolve in, or your predefined entries; they can all be resolving in your mother tongue. Everything in OCA is a layer, so depending on the country that you’re in, we can easily swap in a layer here and there, rather than rebuilding the entire schema structure. With you being a Canadian, you’ll be interested in this: I’ve been building a COVID-19 vaccination specification for the COVID-19 credentials initiative group. It’s an OCA-formatted spreadsheet, if you like. I showed it to the Canadian guys, and I had both Canadian French and English as overlays. They asked, “Could you build some overlays for the Indigenous communities in Canada, like the Inuit?” I said, “Yeah, I think so.” Then, I had to google Inuit, because I had no idea; I’d never seen the language before. It looks almost like hieroglyphics or something, and I thought, “I don’t think this is UTF-8 compliant.” Interestingly, with Overlays Capture Architecture, the character set encoding is a separate layer altogether. So, for an interesting character set such as what the Inuit use, you can just change the character set encoding overlay, and then rebuild all the human-readable labels, and all the predefined entries, and everything in their language. The idea is that when you resolve that on your mobile phone, it comes through as a form. The whole form can be structured in their language, so it’s great. If you’re travelling and you don’t speak a language, you can enter everything in your own language. If the guy at border control only knows Russian, for example, all you have to do is hit a little scroll-down at the top from English to Russian, and the whole credential form changes into their language. It’s a really cool architecture, and we’re super proud of it.
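The layering idea can be pictured with a toy model. This is a simplified, hypothetical structure and not the actual OCA file format: a stable schema base lists the attributes, and language-specific label overlays are swapped in at render time without touching the base.

```python
# Simplified, hypothetical structures; not the actual OCA file format.
SCHEMA_BASE = {"attributes": ["vaccine_name", "dose_number"]}

# One label overlay per language; adding a language means adding an overlay,
# never rebuilding the schema base.
LABEL_OVERLAYS = {
    "en": {"vaccine_name": "Vaccine name", "dose_number": "Dose number"},
    "fr": {"vaccine_name": "Nom du vaccin", "dose_number": "Numéro de dose"},
}


def render_form(language, values):
    """Pair each captured value with its human-readable label in the chosen language."""
    labels = LABEL_OVERLAYS[language]
    return [(labels[attr], values[attr]) for attr in SCHEMA_BASE["attributes"]]


values = {"vaccine_name": "Example-Vax", "dose_number": "2"}
english_form = render_form("en", values)
french_form = render_form("fr", values)
```

The captured values are identical in both forms; only the presentation layer changes, which is what makes switching the form from one language to another cheap.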

Managing Privacy

Mathieu: I won’t lie, it was heavy the first time I started looking into it; there are so many layers. I think the fact that you’ve been able to componentize it like that makes it very easy to swap in and swap out what you need, based on your use case. I don’t know if it would be the masking layer, but if we’re talking about selective disclosure or privacy, there’s another layer in there that addresses that too, right?

Paul: Yes. If you look at data management as a three-step process of data collection, data capture, and data exchange, that second step of data capture is really important, because that’s where you rebuild the semantics for the data exchange. In that way, when it’s gone out the other end, if you like, it’s all beautiful for machine learning, etcetera. Some of the overlays that you use in that second stage, the capture stage, are really things like your human-readable labels, your predefined entries, or your formats. Some of the overlays that you might use on the exchange side might differ, so that’s what we’re looking at at the moment. When you talk about a masking overlay, that requires additional processing on perhaps certain attributes that you flagged in the schema base. Some of that masking might be more suited for the data exchange side, as a secondary process, rather than the data capture side. We’re looking into all those nuances at the moment. I think that OCA has enough flexibility to be very granular on which overlays are used at what stage of the data life cycle.
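As a rough illustration of masking as a secondary process at the exchange stage, the sketch below applies masking rules only to attributes flagged in a schema base. The structure, the rule names (`redact`, `year_only`), and the sample record are all assumptions made for the example, not part of any published overlay definition.

```python
# Hypothetical schema base: lists the attributes and flags the sensitive ones.
SCHEMA_BASE = {
    "attributes": ["name", "date_of_birth", "vaccine_name"],
    "flagged": ["name", "date_of_birth"],
}

# Hypothetical masking overlay, applied at exchange time rather than at capture time.
MASKING_OVERLAY = {"name": "redact", "date_of_birth": "year_only"}


def exchange(record):
    """Return a copy of a captured record with flagged attributes masked for sharing."""
    shared = {}
    for attr in SCHEMA_BASE["attributes"]:
        value = record[attr]
        rule = MASKING_OVERLAY.get(attr) if attr in SCHEMA_BASE["flagged"] else None
        if rule == "redact":
            value = "***"
        elif rule == "year_only":
            value = value[:4]  # assumes an ISO 8601 date string, e.g. "1980-05-17"
        shared[attr] = value
    return shared


captured = {"name": "Jane Doe", "date_of_birth": "1980-05-17", "vaccine_name": "Example-Vax"}
shared = exchange(captured)
```

The captured record itself is left untouched, so the same data can be exchanged under different masking overlays depending on who is receiving it.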

Mathieu: That makes sense. You touched on this earlier when we were talking about KERI being interesting for governance, because you’re not locked into a specific utility. Thinking about privacy, and governance around whatever needs to go into an ecosystem solution; this is where ecosystem builders would be able to integrate this architecture, and to be able to create the rule-set based on this, right?

Paul: Yes, absolutely. The privacy folks are almost a totally different community in themselves. When I talk about the ‘Master Mouse Model,’ which is our vision of a dynamic data economy, what we talk about is really the data layer. However, there’s actually an entirely new layer that goes on top of that, which is the jurisdictional layer. For example, is a company compliant with jurisdictional law to be able to operate within that sort of economy?

When you talk about services: are those services authenticated by a jurisdictional authority? Are they allowed to perform those services? Those sorts of legal things are almost like a different ecosystem altogether. When I was talking about the ‘trusted digital assistant’, that’s really where you’d have APIs from that jurisdictional layer, plugging into your trusted digital assistant. That way, you know that when you’re looking for certain services, they’re already legal and they’ve been authorized properly. Obviously, it shouldn’t be up to the human being to decide whether something’s authorized or not; that’s a legal component.

Mathieu: Yes. So, in a COVID credentials or vaccination credentials use case, based on the jurisdiction you’re in, you don’t want to allow anyone to breach privacy or share PII. It’s all built into the system based on the jurisdiction: if I’m in Canada, or in a specific province in Canada, versus if I’m in a U.S. state, or if I’m in France or wherever, you could have this built right in.

What other use cases do you find interesting in what you see now? You came from the media/music space, you have a lot of experience in pharma, you’ve been spending a lot of time with the COVID projects: what do you find super interesting, or where are you spending most of your time right now?

Paul: There are a couple of interesting things that we’re looking into. One of the projects that we built at the Human Colossus Foundation was a digital immunization passport. We haven’t pushed it that hard, because it’s actually working on brand new technologies that are not standards yet. I think there’s going to be a second wave of digital immunization passports; the first wave that goes out might come across a few issues with governance and all that sort of stuff. We’re sitting in on discussions about the next generation of immunization passports. One of the interesting things in that solution was trying to cryptographically link verifiable credentials to semantic containers.

Our view of a credential is that it should allow you to do something. When you have a credential on your mobile phone, you can show it to border control. They can see, “Oh, you’ve got a green tick mark; you’re good to go.” All of the sensitive health information that sits behind that, we’ve been storing in what we call a semantic container. The holder of the data can give access to that container using a token, and there is obviously a cryptographic link between the container and the credential. What’s interesting is that the credential’s great for saying, “Yes, this data has come from an authentic source.” Essentially, by cryptographically tying that credential to the container, you can say that anything in that container has been authenticated by me, and that has some interesting properties.

When you’re talking about the music industry, I think that for music publishers it could be really interesting. They’ve always had trouble with people copying MP3s and giving them to their friends; the artist doesn’t make any money. However, if you put an MP3 in a container and you’ve got a verifiable credential saying, “Yes, this container has been authenticated by AC/DC or whoever the band is”, then you can track it very well, using these decentralized technologies. So, if anybody is accessing that MP3, firstly, they need a token from, let’s say, the publisher acting on behalf of the band. That would probably be a good way to do it, because there’s always a provenance chain back to where it came from. There’s an interesting technology that we’ve been looking at, called digital watermarking. Basically, it changes the composition of the digital asset within the container a tiny bit. So, if somebody leaks that for any reason, without proper authorization to do it, you can track who leaked it. That’s super cool: it’s more of a defensive approach to data sharing. I think with all these things, the more protection you can have, the better. That’s just another method that we’re looking at for how to stop illegal data sharing.

Mathieu: Is a good analogy that a credential is like a recipe item, whereas a semantic container is the meal? You’re able to put everything in together, you could take it from different places, and just have it as its own package.

Paul: Yes, you can think of it exactly like that. It’s a bundle of information; so it could be a usage policy, plus a huge audio file, plus a couple of attachments — that can all be put into a container. The credential is saying that everything in that container is authentic; you can take a cryptographic hash of the contents of the container, and also a cryptographic hash of the data capture structure that was used to capture that data. If either of those two items changes, the hash basically breaks, and then the credential is automatically nulled.

When you have a UPS package or something like that, the package is the container, and the thing that you’ve signed to get the package is your credential. That’s your way of authenticating that it’s yours, all under your control.
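The hash link between a credential and its container can be sketched with the standard library. This is a minimal illustration under stated assumptions: the container contents and the capture structure are modelled as plain JSON-serialisable dictionaries, SHA-256 is an arbitrary choice of hash, and the "credential" here is just a pair of digests. A real verifiable credential would also be signed by its issuer, which this sketch leaves out.

```python
import hashlib
import json


def digest(obj):
    """SHA-256 over a canonical JSON serialisation (sorted keys, compact separators)."""
    canonical = json.dumps(obj, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def issue_credential(container, capture_structure):
    """Bind a credential to both the container contents and the structure used to capture them."""
    return {
        "container_digest": digest(container),
        "structure_digest": digest(capture_structure),
    }


def is_valid(credential, container, capture_structure):
    """The link holds only while neither the contents nor the capture structure has changed."""
    return (
        credential["container_digest"] == digest(container)
        and credential["structure_digest"] == digest(capture_structure)
    )


# Hypothetical container and capture structure.
container = {"usage_policy": "personal listening only", "track": "example-track.mp3"}
structure = {"attributes": ["usage_policy", "track"]}
credential = issue_credential(container, structure)

tampered = dict(container, usage_policy="unrestricted")
```

Checking `is_valid(credential, container, structure)` succeeds, while the same check against `tampered` fails, mirroring the point that a change to either item breaks the hash and voids the credential.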

Community Activities

Mathieu: All of this work, and thinking, and pilots, and effort: you’re bringing all this to the Trust over I.P., and you seem to be working with Sovrin. Is it more specifically on the UX or guardianship side of things? Are you also involved with Kantara?

Paul: Yes, I’m involved in all of those communities in varying capacities. I work with the MyData community, which is all about personal data. I set up the ‘MyData Health’ thematic group over there, so it’s really about making sure that health data is treated properly throughout the data life cycle; that’s one thing I do. For the COVID-19 Credentials Initiative, I run the schema task force. We’re basically creating schema specifications for COVID-19 tests: that includes test reports, test certificates, vaccine reports, vaccine certificates, those sorts of things. At Sovrin, I lead the communications team. So, whenever you see blog posts going out from Sovrin, it’s usually pulled into our group. We’ll vet it, and make sure that it’s accurate and supportive of the community. And then, with Kantara, I work very closely with the ‘notice and consent’ group over there. Notice and consent is totally not my expertise, but I know how important that piece is to the whole ecosystem, so I’m trying my best to learn as much as I can. There are some amazing people in that space, who have as much knowledge in their space as I do in semantics. I’m totally in awe of those guys, as well.

Mathieu: For the different communities advocating for trusted data, or self-sovereign identity, or decentralized identity, whatever you call it, I think everyone has the same ethos of what we’re trying to do, and the community is amazing. Everyone is trying to help each other out; everyone’s trying to give. Honestly, we’re very fortunate to work with these types of people every day. It’s just amazing; it’s very motivating.

Paul: Absolutely. You’re dealing with people who have 25 years of identity experience, and 25 years of consent experience, and you’re getting these people to the same table to agree on what a dynamic data economy should look like. I’m totally blown away by the level of knowledge.

Mathieu: To close, where could you use help? How could people help you to keep pushing the dynamic data economy forward, and all these things forward?

Paul: It’s very much a cross-community effort, really. The Human Colossus Foundation is pretty much as agnostic as you like; we don’t really have an agenda. We talk to all the communities, and we try hard to make sure that everybody has a place at the dinner table to put their objectives forward, for the good of the economy.

Moving forward, if anybody’s really interested in this space, I would suggest that the Inputs and Semantics Working Group within Trust over I.P. is a great place to join. You’ll get a very broad perspective on the economy from a lot of specialists all working together. For a quick fix on a lot of information, that’s a great place to go. At Trust over I.P., looking at the dual stack, there’s also space for governance across the governance stack, and also new technologies in the technology stack. I think Trust over I.P. is a great space to join. When it was first set up, there were a few murmurings that this is just Sovrin, rebranded. In reality, it’s much broader than that. Sovrin is a utility for self-sovereign identity and will probably always be seen as the genesis point of that whole ecosystem, but Trust over I.P. goes much broader than that. It’s self-sovereign identity, but it’s also decentralized consent, semantics, and ecosystems; it’s a much broader space. The reason I say that is that anybody who is uneasy about SSI, or has any doubts about it, can just go into Trust over I.P., and there will be a space for their expertise, for sure.

Mathieu: Agreed. Paul, thank you very much for doing this with me. It was a great conversation. I’m sure people are going to find this quite interesting and want to go a little deeper into the stack that you guys are bringing forward. I know this is an area that I’m quite interested in going into a lot deeper, so thank you for doing this!

Paul: My pleasure, thanks for having me; I really enjoyed it.